90M unique prices for 500K user groups

Situation

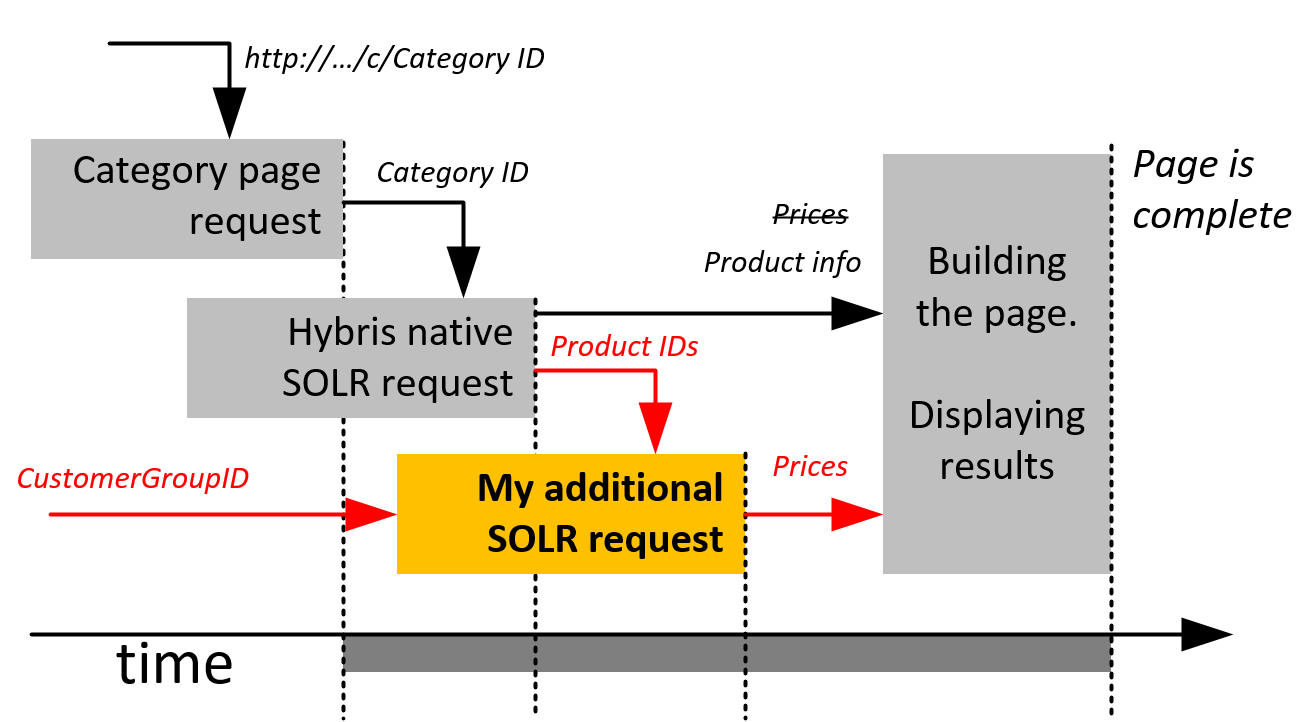

Today’s challenge is about comprehensive pricing. 500,000 customers have unique prices for 180 products, so the system holds 90,000,000 price items in total. Once logged in, each customer should see their personal prices. This is an extreme case of customer group prices.

Complexity

For a small number of customer groups, the solution seems trivial. Indexed products are stored in SOLR as documents, so each document can carry fields like “price_customergroup_N: 1,30”. Alternatively, you can use a map structure such as “price: { customer_group_N => 1,30, … }”. Each time hybris indexes a product, the prices for all groups are retrieved and stored in the index. The storefront uses this index for search results and category pages, so all you need to do is read the right field from the document. The obvious weakness of this approach is that it is designed for small sets of customer or price groups. For larger sets, and especially for huge ones, you need to reconsider the design: large SOLR documents significantly slow down both search and indexing.

Solution

Actually, a large set of fields in SOLR is not a serious drawback in itself. Hybris can be extended to restrict the set of fields it retrieves: the SOLR query parameter “fl” specifies the list of fields to return. This improvement is interesting, but I decided to go a different way. The technical details are below; first comes the demonstration, with 90M price items and 500K customers.

Technical details
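For comparison, the fl-based alternative can be sketched in plain Java. This is a hypothetical illustration, not hybris code. Only the price_customergroup_N naming comes from the article; the class, the method names and the "code"/"name" fields are assumptions. The sketch shows why the per-product document grows with the number of groups (500,000 groups means 500,000 price fields in every product document) and how fl narrows the response to the single price field the current customer needs.

```java
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical sketch of the "one wide document per product" layout.
// Only the price_customergroup_N naming is taken from the article.
final class WideDocumentSketch {

    static final String PRICE_FIELD_PREFIX = "price_customergroup_";

    // Builds one product document that holds a price field for every customer group.
    static Map<String, String> buildDocument(String productCode, Map<String, String> pricesByGroup) {
        Map<String, String> doc = new LinkedHashMap<>();
        doc.put("code", productCode);
        for (Map.Entry<String, String> e : pricesByGroup.entrySet()) {
            doc.put(PRICE_FIELD_PREFIX + e.getKey(), e.getValue());
        }
        return doc;
    }

    // Value for the SOLR "fl" parameter: the common fields plus this group's price field only.
    static String fieldList(List<String> commonFields, String customerGroup) {
        StringBuilder fl = new StringBuilder(String.join(",", commonFields));
        if (fl.length() > 0) {
            fl.append(',');
        }
        return fl.append(PRICE_FIELD_PREFIX).append(customerGroup).toString();
    }

    public static void main(String[] args) {
        Map<String, String> prices = new LinkedHashMap<>();
        prices.put("1", "1.30");
        prices.put("2", "1.25");
        prices.put("3", "1.10");
        Map<String, String> doc = buildDocument("P100", prices);
        System.out.println(doc.size() + " fields"); // 4 fields: code + 3 prices
        System.out.println(fieldList(List.of("code", "name"), "2")); // code,name,price_customergroup_2
    }
}
```

With SolrJ, the same list can be passed through SolrQuery.setFields(...). Note that fl only trims the response. Every indexing run still has to write the whole wide document.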

Hybris

- New priceRequestService.injectPrices

- Custom PriceFactory: calls priceRequestService.injectPrices(prices, customerGroupId)

- Custom SolrProductSearchFacade

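```java
import java.util.HashMap;
import java.util.Map;

import org.apache.solr.client.solrj.SolrClient;
import org.apache.solr.client.solrj.SolrQuery;
import org.apache.solr.client.solrj.impl.LBHttpSolrClient;
import org.apache.solr.client.solrj.response.QueryResponse;
import org.apache.solr.client.solrj.util.ClientUtils;
import org.apache.solr.common.SolrDocument;

// Client for the separate core that holds only personal prices
// (SolrJ 6.x-style constructor; newer SolrJ versions build clients through builders)
SolrClient solrClient = new LBHttpSolrClient("http://localhost:8983/solr/personalprices");

// When no customer is known, fall back to the "<ALL>" price entries
if (customerUid == null || customerUid.isEmpty()) {
    customerUid = "<ALL>";
}

// listOfcodes: product codes of the current result page (assumed to be space-separated)
SolrQuery solrSearchQuery = new SolrQuery();
solrSearchQuery.setQuery("productcode:(" + listOfcodes + ") AND customercode:"
        + ClientUtils.escapeQueryChars(customerUid)); // escape query syntax in the uid
solrSearchQuery.setRows(30); // one page of search results

QueryResponse response = solrClient.query(solrSearchQuery);

// productCode -> price for the current customer
Map<String, String> productprices = new HashMap<>();
for (SolrDocument solrDocument : response.getResults()) {
    String productCode = solrDocument.getFieldValue("productcode").toString();
    String price = solrDocument.getFieldValue("price").toString();
    productprices.put(productCode, price);
}
```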
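The facade returns a productCode → price map. The list above names priceRequestService.injectPrices(prices, customerGroupId) but does not show its body. The following is a hypothetical sketch of what such a method might do. PriceRow and PersonalPriceLookup are illustrative stand-ins, not hybris or SolrJ types. The method collects the product codes, fetches the personal prices in one lookup, and keeps the default price wherever no personal price exists.

```java
import java.math.BigDecimal;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

// Hypothetical sketch of injectPrices(prices, customerGroupId).
// PriceRow and PersonalPriceLookup are illustrative stand-ins, not hybris or SolrJ types.
final class PriceRequestServiceSketch {

    // In the article's setup, this lookup would be the query against the personalprices core.
    interface PersonalPriceLookup {
        Map<String, String> find(List<String> productCodes, String customerGroupId);
    }

    // Minimal stand-in for a price row: product code plus price value.
    static final class PriceRow {
        final String productCode;
        final BigDecimal value;

        PriceRow(String productCode, BigDecimal value) {
            this.productCode = productCode;
            this.value = value;
        }
    }

    private final PersonalPriceLookup lookup;

    PriceRequestServiceSketch(PersonalPriceLookup lookup) {
        this.lookup = lookup;
    }

    // Replaces default prices with personal ones; products without a personal price keep the default.
    List<PriceRow> injectPrices(List<PriceRow> prices, String customerGroupId) {
        List<String> codes = new ArrayList<>();
        for (PriceRow row : prices) {
            codes.add(row.productCode);
        }
        // One lookup for the whole page instead of one request per product
        Map<String, String> personal = lookup.find(codes, customerGroupId);

        List<PriceRow> result = new ArrayList<>(prices.size());
        for (PriceRow row : prices) {
            String price = personal.get(row.productCode);
            // Assumes prices are stored with a dot decimal separator, e.g. "8.50"
            result.add(price == null ? row : new PriceRow(row.productCode, new BigDecimal(price)));
        }
        return result;
    }
}
```

Passing the lookup in as an interface keeps the sketch testable without a running SOLR instance. In a real setup, the lookup would wrap a query like the one above.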


SOLR price request

- An additional SOLR core for prices (personalprices)

- Simple structure (productCode – customerGroup – currencyCode – priceValue).
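The layout of the price core can be sketched as follows. This is a hypothetical illustration. The productcode, customercode and price fields match the facade query, but the id and currencycode field names and the composite id itself are assumptions. The sketch also spells out the arithmetic behind the title: 500,000 groups × 180 products give 90,000,000 documents per currency.

```java
import java.util.List;

// Hypothetical sketch of the flat price core: one document per
// (productCode, customerGroup, currencyCode) holding a single price value.
// "productcode", "customercode" and "price" match the facade query;
// "id" and "currencycode" are assumed field names.
final class PriceCoreCsv {

    static final class PriceEntry {
        final String productCode;
        final String customerGroup;
        final String currencyCode;
        final String priceValue;

        PriceEntry(String productCode, String customerGroup, String currencyCode, String priceValue) {
            this.productCode = productCode;
            this.customerGroup = customerGroup;
            this.currencyCode = currencyCode;
            this.priceValue = priceValue;
        }

        // Assumed uniqueKey: re-importing the same combination overwrites the old document
        String id() {
            return productCode + "_" + customerGroup + "_" + currencyCode;
        }
    }

    static final String HEADER = "id,productcode,customercode,currencycode,price";

    // Builds a CSV body with a header line followed by one line per price entry.
    // Assumes no value contains a comma, so prices must use a dot decimal separator.
    static String toCsv(List<PriceEntry> entries) {
        StringBuilder sb = new StringBuilder(HEADER).append('\n');
        for (PriceEntry e : entries) {
            sb.append(e.id()).append(',')
              .append(e.productCode).append(',')
              .append(e.customerGroup).append(',')
              .append(e.currencyCode).append(',')
              .append(e.priceValue).append('\n');
        }
        return sb.toString();
    }

    // Number of documents in the core, e.g. 500,000 groups x 180 products x 1 currency
    static long documentCount(long customerGroups, long products, long currencies) {
        return customerGroups * products * currencies;
    }
}
```

The "<ALL>" rows used by the facade as a fallback would simply be one more customer group in this file.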

Super fast update

A full price update, for all customers and all products, takes 478 seconds on my laptop. Prices can be loaded with a single CSV import request:

http://localhost:8983/solr/personalprices/update/csv?stream.file=/hybris/solr-prices/testdata.csv&stream.contentType=text/plain;charset=utf-8
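The request above can also be built programmatically. The sketch below uses hypothetical class and method names. It URL-encodes the parameter values and optionally appends commit=true, which the original URL does not include. Keep in mind that stream.file names a file on the SOLR server's own filesystem, and SOLR accepts it only when remote streaming is enabled in its configuration. Posting the CSV body directly to the update handler with a text/csv content type avoids that requirement.

```java
import java.net.URI;
import java.net.URLEncoder;
import java.nio.charset.StandardCharsets;

// Sketch: builds the CSV import request with URL-encoded parameter values.
// The "commit" flag is an addition; the original URL does not send it.
final class CsvImportRequest {

    static URI csvUpdateUri(String solrBaseUrl, String core, String serverSideCsvPath, boolean commit) {
        StringBuilder query = new StringBuilder()
                .append("stream.file=").append(encode(serverSideCsvPath))
                .append("&stream.contentType=").append(encode("text/plain;charset=utf-8"));
        if (commit) {
            // Make the new prices visible to searches once the import finishes
            query.append("&commit=true");
        }
        return URI.create(solrBaseUrl + "/" + core + "/update/csv?" + query);
    }

    private static String encode(String value) {
        return URLEncoder.encode(value, StandardCharsets.UTF_8); // Charset overload: Java 10+
    }

    public static void main(String[] args) {
        System.out.println(csvUpdateUri("http://localhost:8983/solr", "personalprices",
                "/hybris/solr-prices/testdata.csv", true));
    }
}
```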

Dzmitryi Halahayeu

11 May 2017 at 05:08

Thank you for your article. Do you have any idea how to handle price facets in such a case?

muthukarthikm

12 October 2017 at 04:57

Hi, I couldn’t hear your voice in the video, and I am looking to do the same thing in a B2B project. Can you explain clearly how to do this, and please add audio to your video.

Please let me know how to handle this situation; you can email or call me.

Email : muthukarthikm92@gmail.com

Phone 7406800101

Rauf Aliev

12 October 2017 at 05:20

I explained the solution in the article. Do you expect something on top of it in the video?

Balaji Mohan

16 October 2017 at 07:45

Hi Rauf!

Thanks for this article. I’m sure there are many people out there looking for customer-specific pricing in a B2B scenario. I have found many questions about it on the experts forum as well.

The first comment rightly asked you about facets. I’m sure you are aware that we cannot have facet filters with this solution (or we would have to make additional calls between the two cores).

Do you have any ideas in mind to do this?

We also realised that having many price fields in one Product document makes the SOLR document large. That approach is achievable by tweaking the OOTB SOLR search query generators, but its performance impact has to be evaluated for scalability.

Do you feel that having a larger number of documents (in core 2) causes less performance overhead than having larger documents in a single core?

Rauf Aliev

16 October 2017 at 07:55

Hi! It really depends on the statistics: how often the prices change for a document and how much data each document holds. Large documents don’t work well because internally SOLR re-creates the whole document even if only a part of it changes. Recalculating price ranges in memory by processing the second core, and then updating the ranges in the first core, may work as well. The full load, processing and replacement may take only seconds because there are no database operations, only NoSQL and SOLR’s internal facet indexing.
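The in-memory recalculation of price ranges mentioned in the reply could start from something like the following hypothetical sketch. The range boundaries, the label format and the class name are assumptions. The input is a productCode → personal price map for one customer group, for example read from the price core. The sketch only computes range labels and facet-style counts. Writing the ranges back into the first core is not shown.

```java
import java.math.BigDecimal;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical sketch: price-range facet counts for one customer group, computed in memory.
// Boundaries are illustrative and must be sorted ascending; prices are assumed non-negative.
final class PriceRangeFacets {

    // Label for the range [lower, upper) containing the price, or "<last>+" above the last boundary
    static String rangeLabel(BigDecimal price, List<BigDecimal> boundaries) {
        BigDecimal lower = BigDecimal.ZERO;
        for (BigDecimal upper : boundaries) {
            if (price.compareTo(upper) < 0) {
                return label(lower, upper);
            }
            lower = upper;
        }
        return lower.toPlainString() + "+";
    }

    // Product count per range, listed in boundary order and including empty ranges
    static Map<String, Integer> facetCounts(Map<String, BigDecimal> pricesByProduct, List<BigDecimal> boundaries) {
        Map<String, Integer> counts = new LinkedHashMap<>();
        BigDecimal lower = BigDecimal.ZERO;
        for (BigDecimal upper : boundaries) {
            counts.put(label(lower, upper), 0);
            lower = upper;
        }
        counts.put(lower.toPlainString() + "+", 0);
        for (BigDecimal price : pricesByProduct.values()) {
            counts.merge(rangeLabel(price, boundaries), 1, Integer::sum);
        }
        return counts;
    }

    private static String label(BigDecimal lower, BigDecimal upper) {
        return lower.toPlainString() + "-" + upper.toPlainString();
    }
}
```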