Explaining SAP Hybris/Solr Relevance Ranking for phrase queries

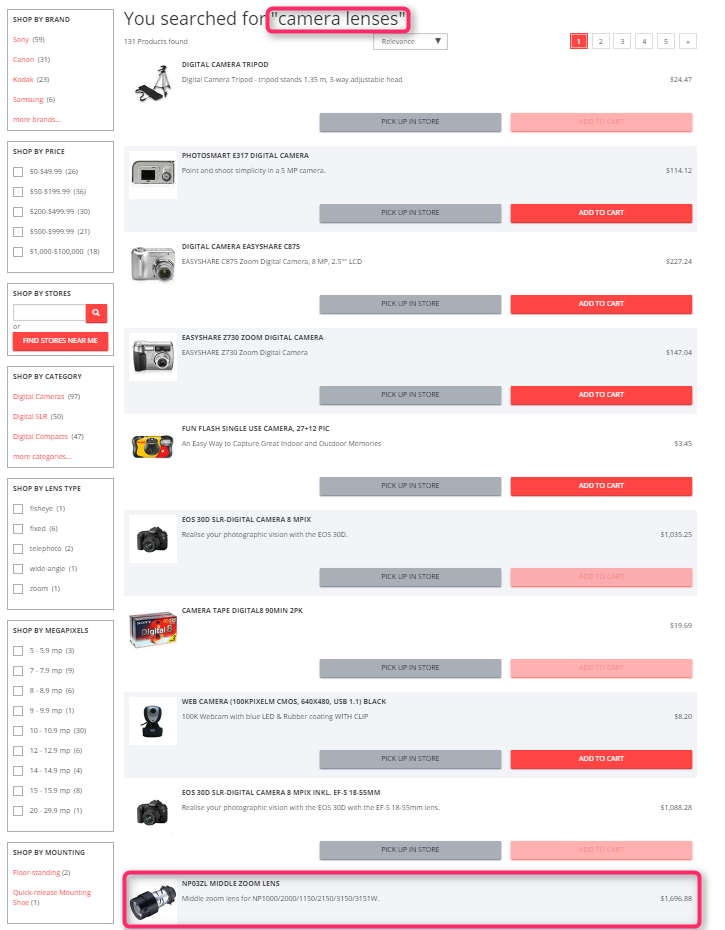

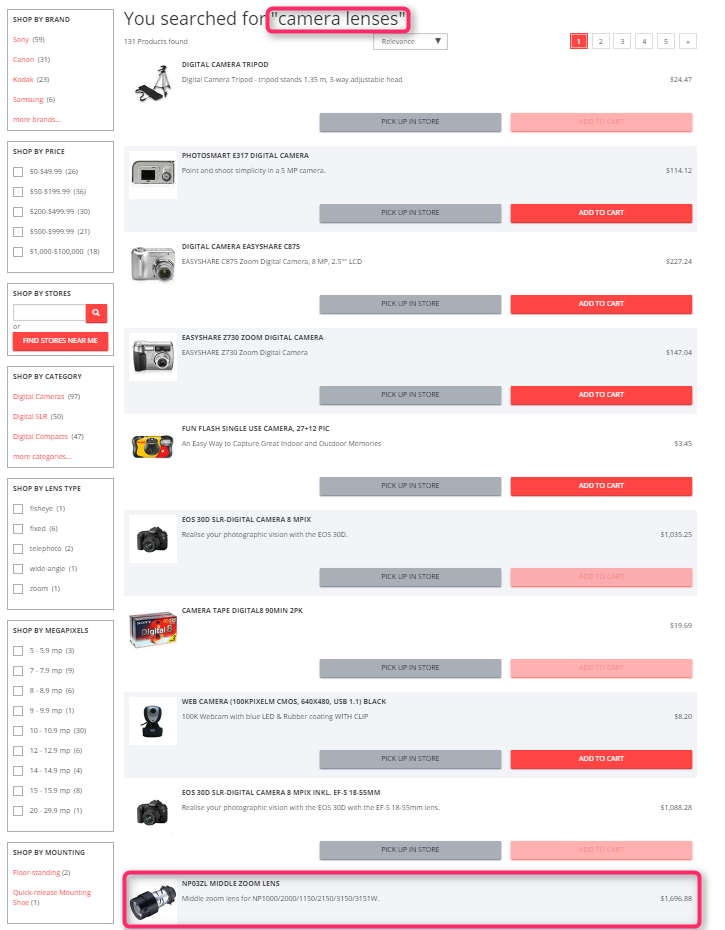

This article began with the simple question: why the search query “camera lenses” leads to tripods in the Electronics SAP Hybris demo storefront rather than the camera lenses products when we have a category named “camera lenses” and a product having “Lens” in its name. Try yourself!

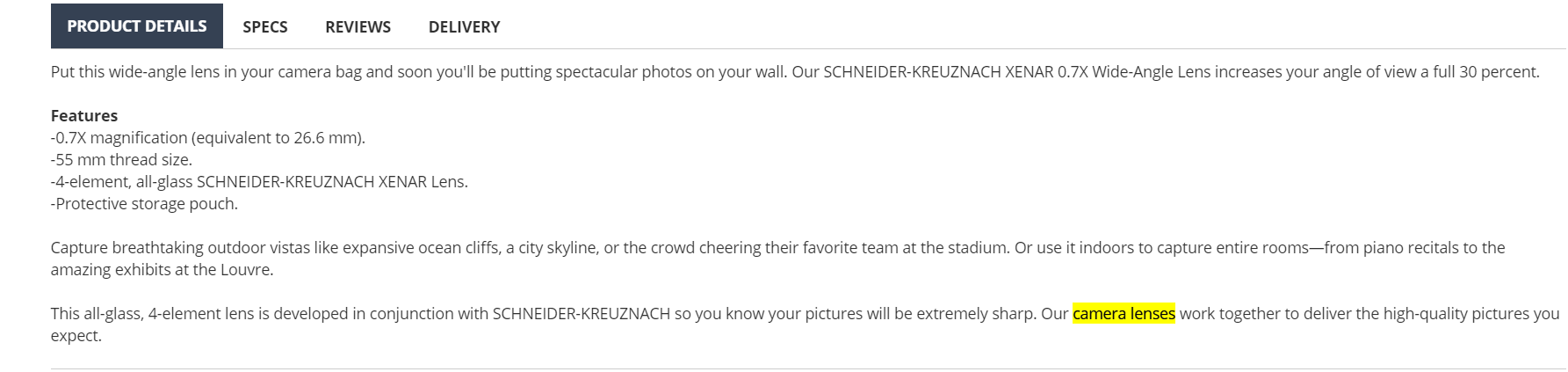

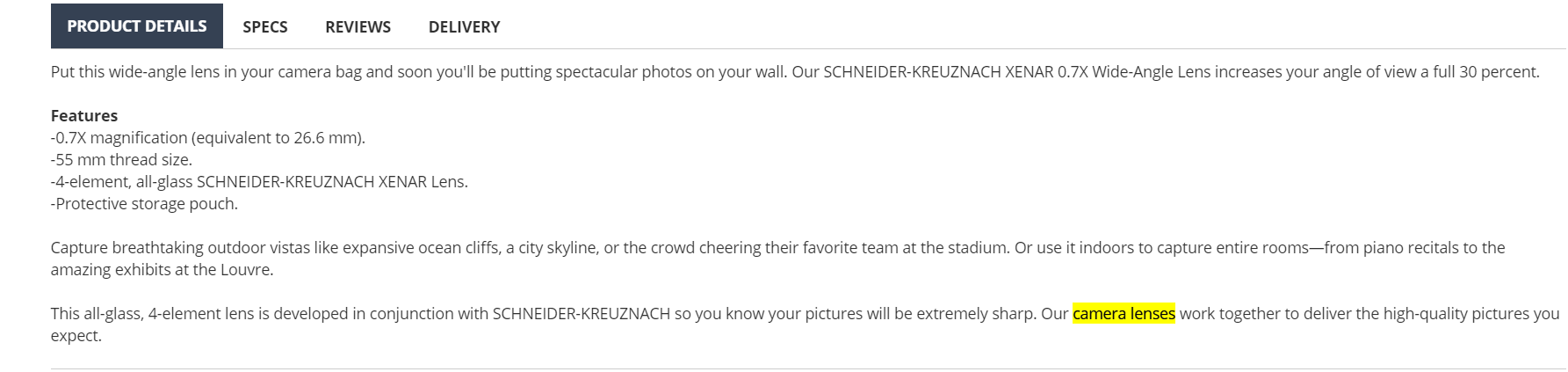

The first relevant product is at the 10th position! This is the case despite the fact that some products have the words from the query both in the description and category name, such as the following one:

The first relevant product is at the 10th position! This is the case despite the fact that some products have the words from the query both in the description and category name, such as the following one:

In addition to that, this product has “Lens” in the title that should make it even higher in the search results since we state that the search supports different word forms.

In this article, I will explain why this is happening.

In addition to that, this product has “Lens” in the title that should make it even higher in the search results since we state that the search supports different word forms.

In this article, I will explain why this is happening.

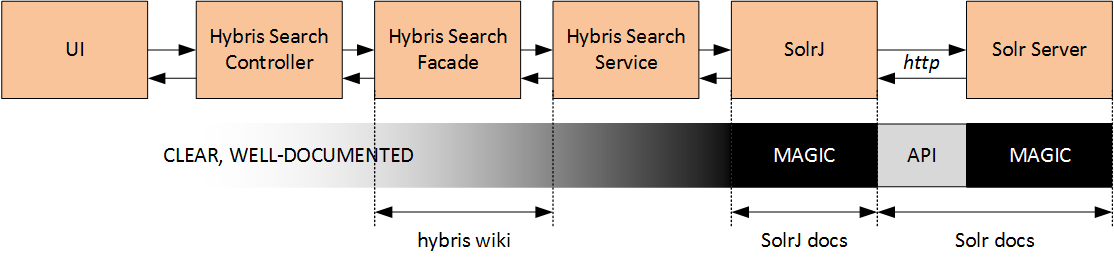

There are three interfaces between hybris and Solr:

There are three interfaces between hybris and Solr:

How does hybris find words for “CA…”? there is a Solr multivalued field called “autosuggest” (and its language versions autosuggest_) of the “text_spell” type. This type is defined as Solr standard TextField type. It contains lowercased tokens (words) from the product attributes configured as “autosuggest” indexed properties.

[!!!!] The explained below is relevant for (default) Lucene query parser, default hybris configuration, and version 6.4 of hybris Commerce. LuceneQParser is used only with OOTB Multi field Free Text Query Parser. See this article for explanations of the differences. The changes in the configuration may lead to different queries and ranking.

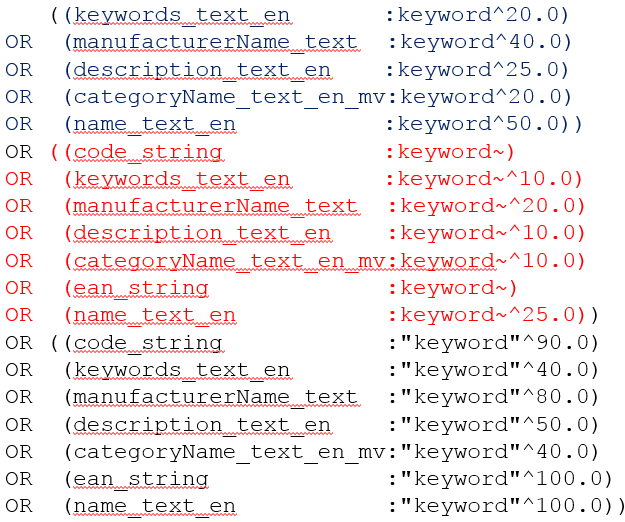

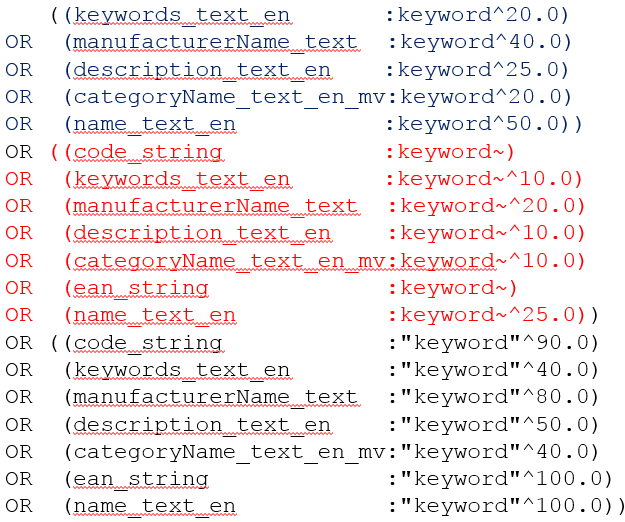

The simple request “keyword” leads to the following Solr request (or similar – it depends on the Solr configuration):

How does hybris find words for “CA…”? there is a Solr multivalued field called “autosuggest” (and its language versions autosuggest_) of the “text_spell” type. This type is defined as Solr standard TextField type. It contains lowercased tokens (words) from the product attributes configured as “autosuggest” indexed properties.

[!!!!] The explained below is relevant for (default) Lucene query parser, default hybris configuration, and version 6.4 of hybris Commerce. LuceneQParser is used only with OOTB Multi field Free Text Query Parser. See this article for explanations of the differences. The changes in the configuration may lead to different queries and ranking.

The simple request “keyword” leads to the following Solr request (or similar – it depends on the Solr configuration):

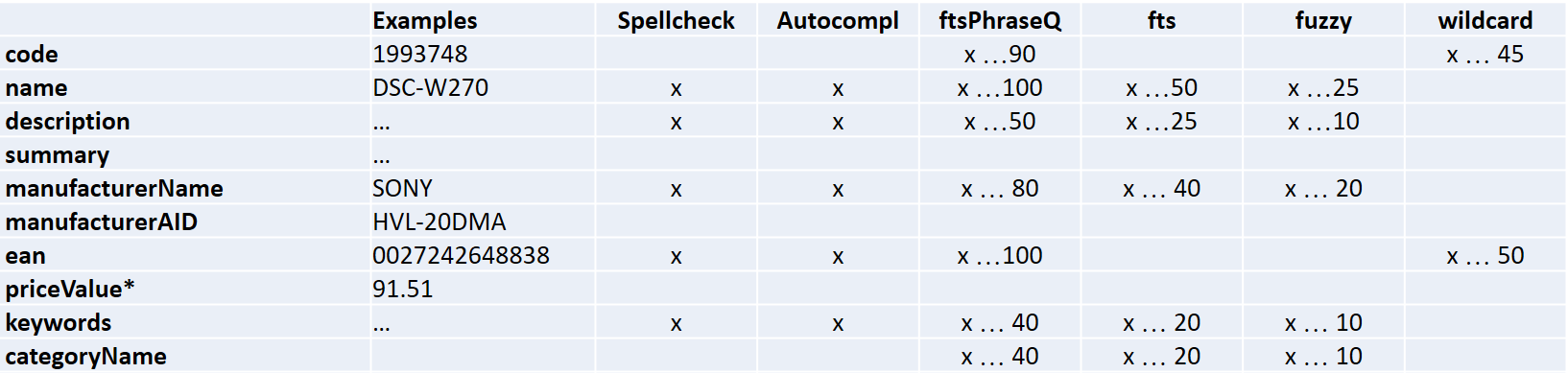

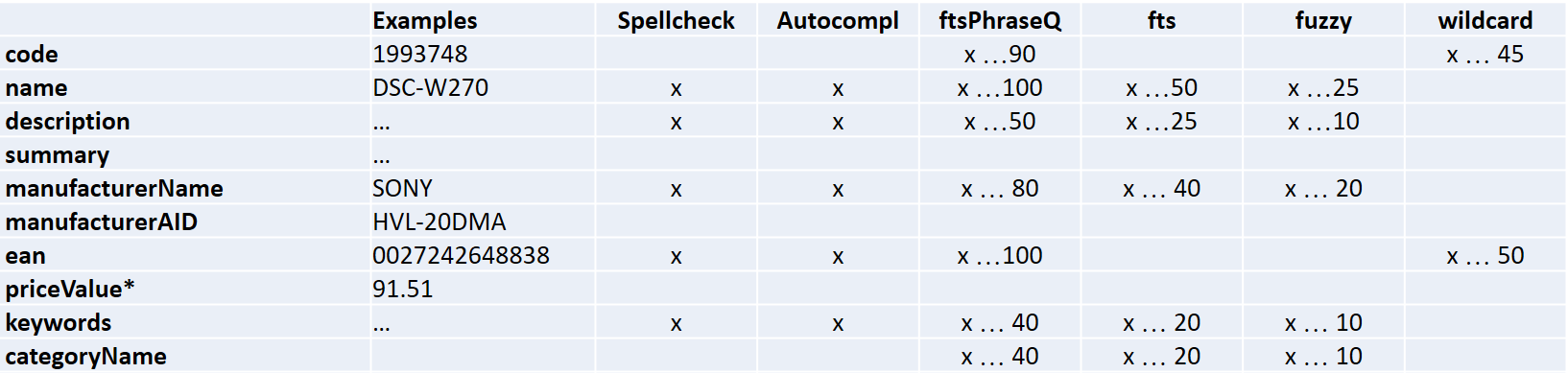

Each line represents a condition. The number at the end is a boost factor used in the relevancy calculation. Boost factor is used to increase or decrease the importance in the filter in the query. Below I will show how this value is used in the relevancy calculation for search result ranking.

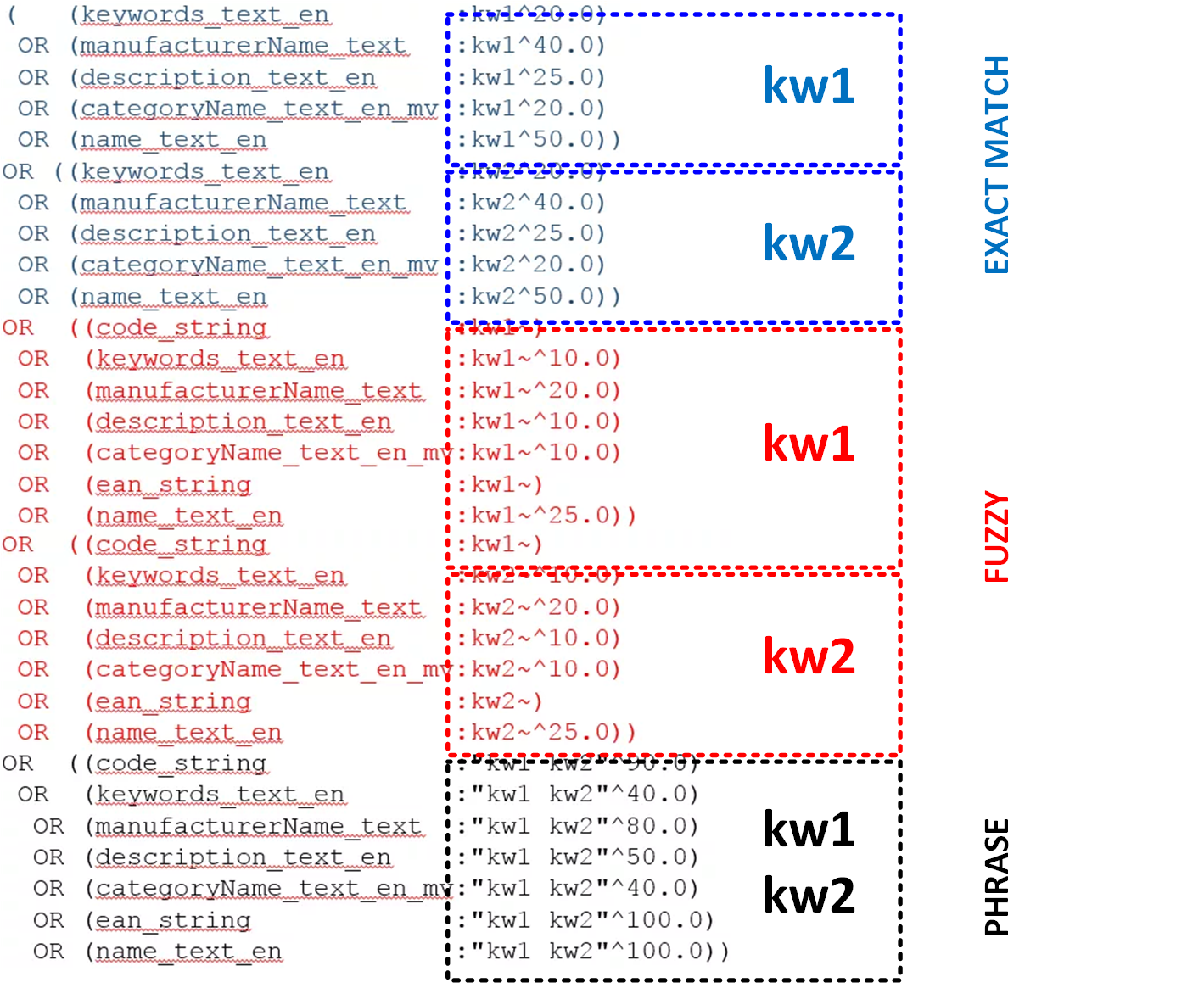

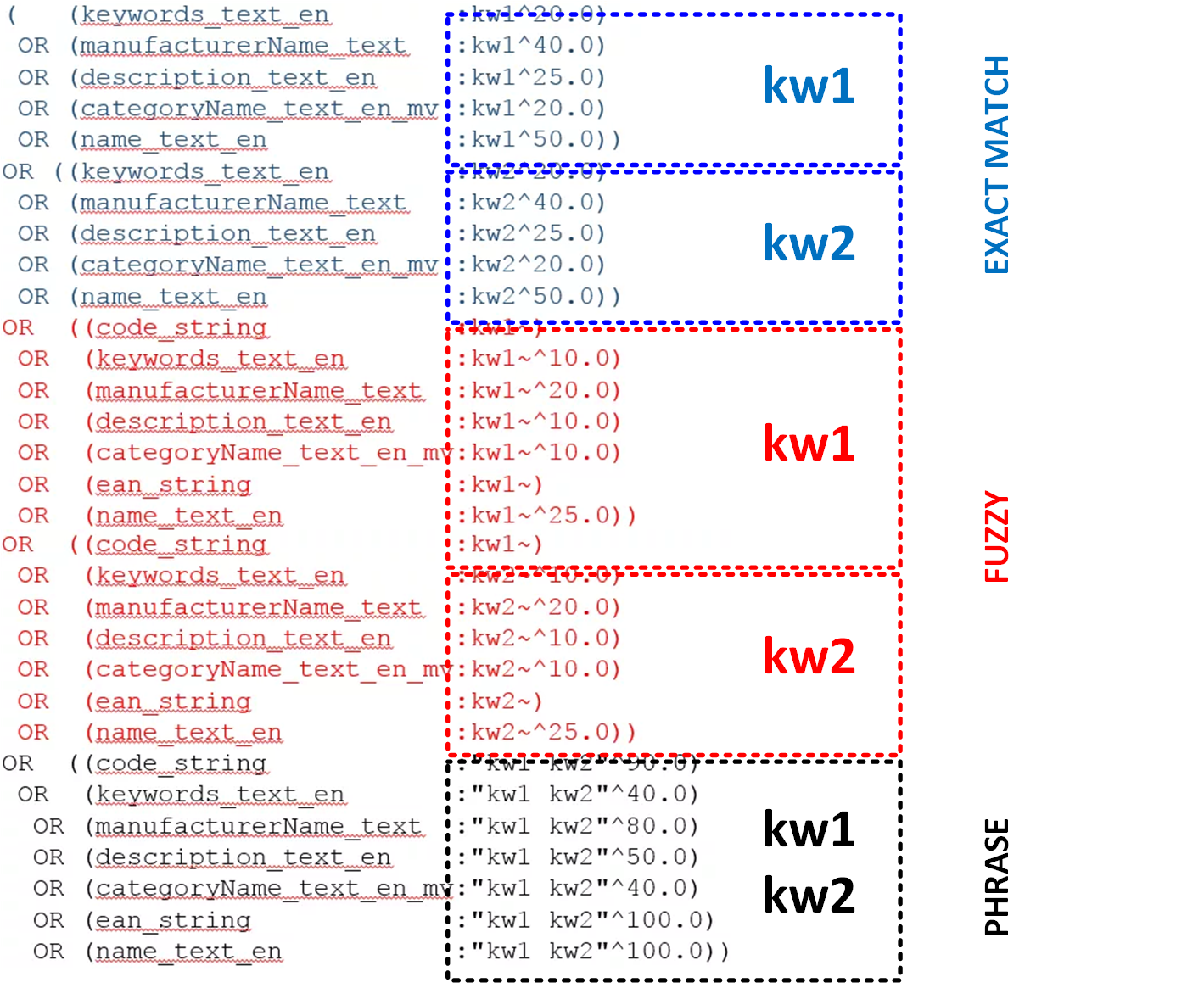

The Solr query for the sample query

will look like:

Each line represents a condition. The number at the end is a boost factor used in the relevancy calculation. Boost factor is used to increase or decrease the importance in the filter in the query. Below I will show how this value is used in the relevancy calculation for search result ranking.

The Solr query for the sample query

will look like:

For three and more words, the search request has a condition for the whole set of the words as a single phrase. For example, for the request “kw1 kw2 kw3” the “black” section contains three words, and the blue and red sections have

.

For multi-word requests, only a whole phrase will affect the relevancy:

For three and more words, the search request has a condition for the whole set of the words as a single phrase. For example, for the request “kw1 kw2 kw3” the “black” section contains three words, and the blue and red sections have

.

For multi-word requests, only a whole phrase will affect the relevancy:

This configuration says, for example, that

is involved in the phrase search, but it is not involved in a single keyword search. It means that if your query has nothing but the code, the boost factor 90 will be applied to your request, that is #2 after the exact name and exact EAN.

So there are two intertwined phases, or processes, in the search engine: fetching relevant results and sorting them. Look at the conditions again: all of them are joined by ORs. Once any condition is true, and the product will be included in the search results, but, possibly, far from the beginning.

In reply to my query, “camera lenses”, I got a number of product in unexpected order. According to the hybris search engine ( with the default configuration – not the best one), “Monopod 100” has overtaken “Schneider-Kreuznach Xenar 0.7X Wide Angle Lens, 55mm” which seems much more relevant.

Query:

This configuration says, for example, that

is involved in the phrase search, but it is not involved in a single keyword search. It means that if your query has nothing but the code, the boost factor 90 will be applied to your request, that is #2 after the exact name and exact EAN.

So there are two intertwined phases, or processes, in the search engine: fetching relevant results and sorting them. Look at the conditions again: all of them are joined by ORs. Once any condition is true, and the product will be included in the search results, but, possibly, far from the beginning.

In reply to my query, “camera lenses”, I got a number of product in unexpected order. According to the hybris search engine ( with the default configuration – not the best one), “Monopod 100” has overtaken “Schneider-Kreuznach Xenar 0.7X Wide Angle Lens, 55mm” which seems much more relevant.

Query:

Both products have both keywords in two fields, categoryName and description. All other fields either not mentioned in the request or not contain any of the keywords.

At this point, it should be clear how the search engine solves the first task, understanding what products have to be included into the response.

Next phase is ranking these products and displaying them ordered by relevancy.

For each document, the relevancy is calculated as a sum (only for one query builder in Hybris; Read here for details) of relevancy components, corresponding the specific conditions. For example, for the query above, we should sum up the following components:

Actually, all three Score formulas are the same. The only difference is a score factor, that can be different for Phrases, Fuzzy and Exact match (per field).

Solr has a debug flag that shows the scores behind the results. In order to see the scores for this request you should to:

Total score of Monopod is higher, and this is why its position is higher in the search results. But how these scores are calculated? What gives the win to the Monopod?

The answer is “all scores”:

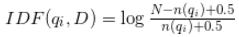

where n(qi) is a number of documents containing a word qi.

and N is a total number of documents (in our example N = 340).

For “camera”: qi = 108 documents. IDF(“camera”) will be 1.15.

For “lenses” qi = 52 documents. IDF (“lenses”) will be 1.87.

IDF = IDF (“camera) + IDF (“lenses”) = 1.15 + 1.87 = 3.02.

=> IDF = 3.02 for both terms.

For the phrase “camera lenses” IDF is calculated as a sum of IDF(“camera”) and IDF(“lenses”), and not depend on the number of documents containing a whole phrase.

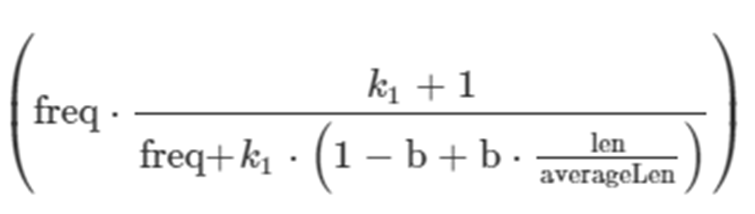

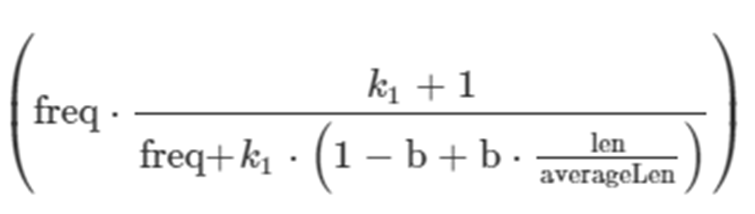

The term frequency is a simple factor. The more frequent a term occurs in a document, the greater it’s score. Documents with twice the number of terms as another document aren’t twice as relevant:

where n(qi) is a number of documents containing a word qi.

and N is a total number of documents (in our example N = 340).

For “camera”: qi = 108 documents. IDF(“camera”) will be 1.15.

For “lenses” qi = 52 documents. IDF (“lenses”) will be 1.87.

IDF = IDF (“camera) + IDF (“lenses”) = 1.15 + 1.87 = 3.02.

=> IDF = 3.02 for both terms.

For the phrase “camera lenses” IDF is calculated as a sum of IDF(“camera”) and IDF(“lenses”), and not depend on the number of documents containing a whole phrase.

The term frequency is a simple factor. The more frequent a term occurs in a document, the greater it’s score. Documents with twice the number of terms as another document aren’t twice as relevant:

where:

where:

It turned out that the short description for Monopod 100 makes the Term Frequency higher, and eventually, makes the higher position for the product.

This explains higher Monopod’s exact match, fuzzy match and phrase match scores. Just because this field is shorter, and the ratio len/averagelen is large, but once it is in the determinant, TFNorm is larger, and, consequently, the whole score is larger.

Certainly, other scores also worked, but their contribution into the final score is smaller.

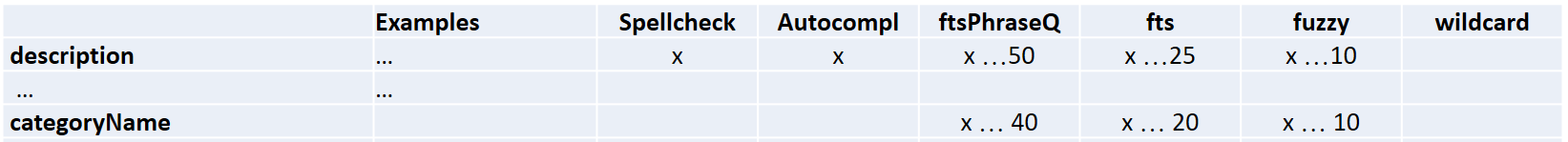

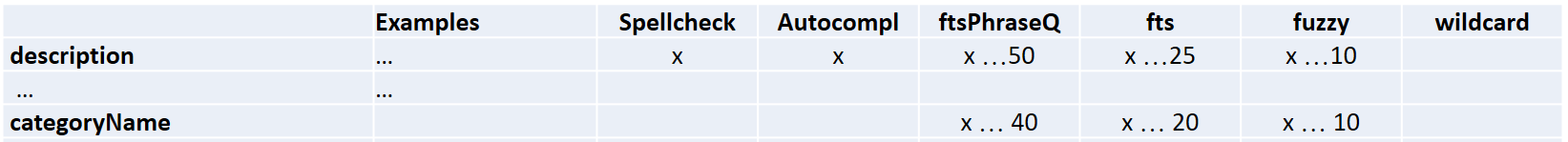

By the way, we have a category with the name “Camera Lenses” that is exactly what we looked for. From the business perspective, all the products from this category should be higher. However, the categoryName field has smaller boost factors than the description field:

Score(categoryName contain “camera lenses”) = (Boost40IDF3.17TFNorm0.85)=108.33

Score(description contain “camera lenses”) = (Boost50IDF3.01TFNorm0.85)=128.49

has a following set of filters:

There is a filter called SnowballPorterFilterFactory. This class dynamically loads Porter Stemmer algorithm that is generated from a set of rules. These rules are very simple, and a list of exceptions is limited. So, for the word “Lens” the stemmer creates a singular form, Len, that is not correct. A noun that ends with ” s” doesn’t necessarily mean it’s a plural. Porter knows about “news”, but doesn’t know “lens”.

Solr tries to find “lens” (as singular from lenses) in “name_string_en”, but finds only “len” that is the different word. This is why Lens in the product name doesn’t work. Synonyms would help there a lot.

This article is focused on Hybris 6.4 with Solr 6.1 support. Solr 6.1 is built on top of Lucene 6. Since Solr 6, Lucene uses BM25 as a default similarity method. I explained this algorithm above. In the previous versions of Lucene, Solr and old versions of SAP hybris, the default similarity class is based on simplified algorithm (see DefaultSimilarity, http://www.lucenetutorial.com/advanced-topics/scoring.html).

Hybris also disables TF/IDF scoring for some string fields by specifying custom similarity class. The reason is Hero products (the products with huge boost factor) that need to be appeared in the expected order in the list:

© Rauf Aliev, July 2017

Score(categoryName contain “camera lenses”) = (Boost40IDF3.17TFNorm0.85)=108.33

Score(description contain “camera lenses”) = (Boost50IDF3.01TFNorm0.85)=128.49

has a following set of filters:

There is a filter called SnowballPorterFilterFactory. This class dynamically loads Porter Stemmer algorithm that is generated from a set of rules. These rules are very simple, and a list of exceptions is limited. So, for the word “Lens” the stemmer creates a singular form, Len, that is not correct. A noun that ends with ” s” doesn’t necessarily mean it’s a plural. Porter knows about “news”, but doesn’t know “lens”.

Solr tries to find “lens” (as singular from lenses) in “name_string_en”, but finds only “len” that is the different word. This is why Lens in the product name doesn’t work. Synonyms would help there a lot.

This article is focused on Hybris 6.4 with Solr 6.1 support. Solr 6.1 is built on top of Lucene 6. Since Solr 6, Lucene uses BM25 as a default similarity method. I explained this algorithm above. In the previous versions of Lucene, Solr and old versions of SAP hybris, the default similarity class is based on simplified algorithm (see DefaultSimilarity, http://www.lucenetutorial.com/advanced-topics/scoring.html).

Hybris also disables TF/IDF scoring for some string fields by specifying custom similarity class. The reason is Hero products (the products with huge boost factor) that need to be appeared in the expected order in the list:

© Rauf Aliev, July 2017

That is why you need to test the search thoroughly after any change in the boost factors and tune the boosts…. but testing of the search is a topic for a separate article. Stay tuned!

The first relevant product is at the 10th position! This is the case despite the fact that some products have the words from the query both in the description and category name, such as the following one:

The first relevant product is at the 10th position! This is the case despite the fact that some products have the words from the query both in the description and category name, such as the following one:

In addition to that, this product has “Lens” in the title that should make it even higher in the search results since we state that the search supports different word forms.

In this article, I will explain why this is happening.

In addition to that, this product has “Lens” in the title that should make it even higher in the search results since we state that the search supports different word forms.

In this article, I will explain why this is happening.

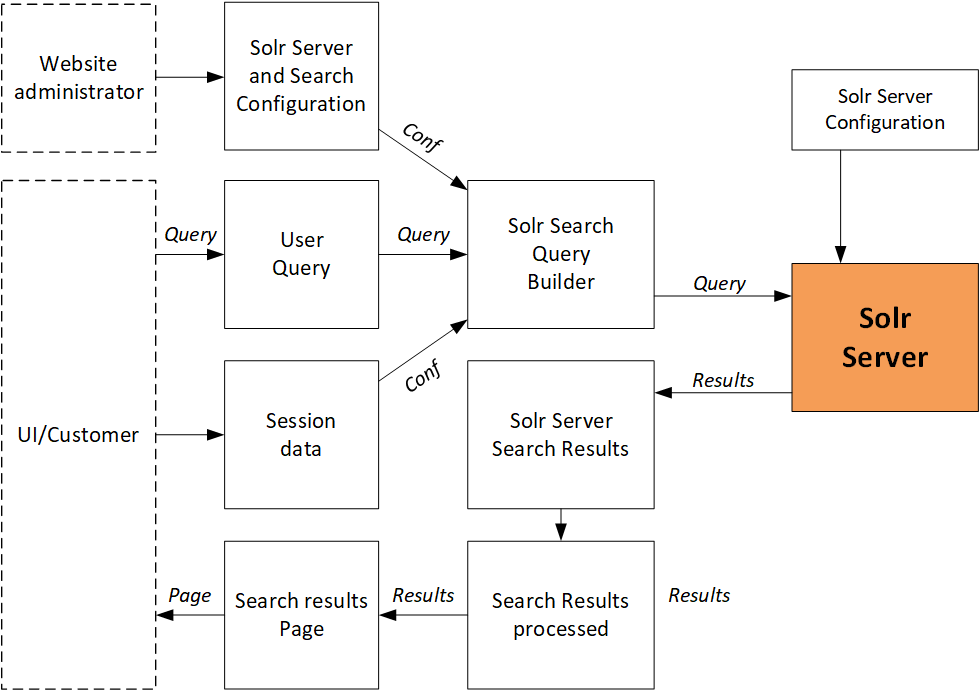

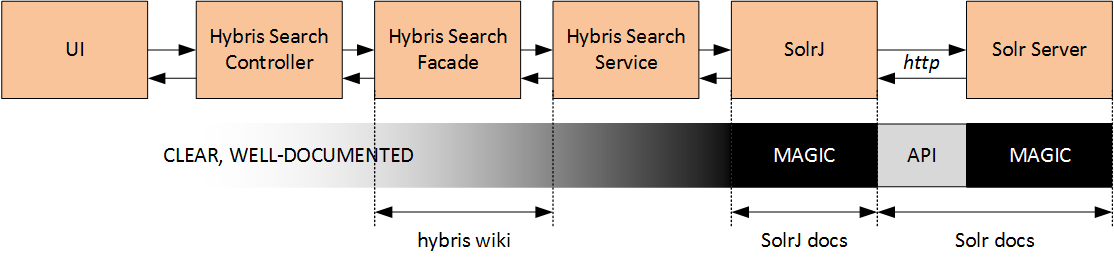

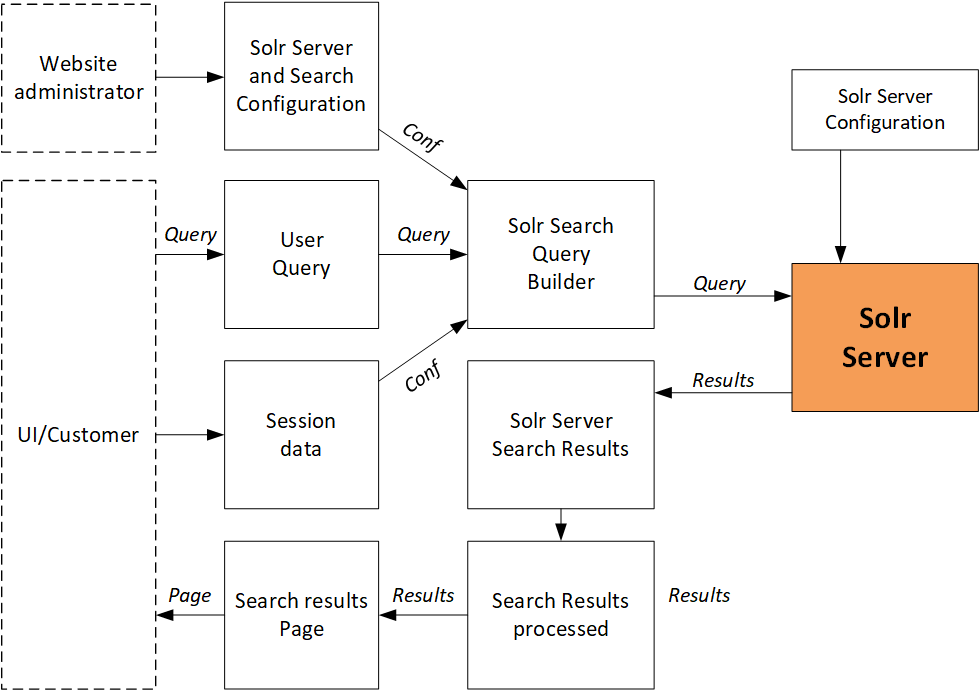

Hybris and Solr integration

Hybris uses Solr for a product search. For every user search request and some navigation requests, hybris calls the search engine. There are three interfaces between hybris and Solr:

There are three interfaces between hybris and Solr:

- Indexing

- Search

- Autosuggest and spellcheck

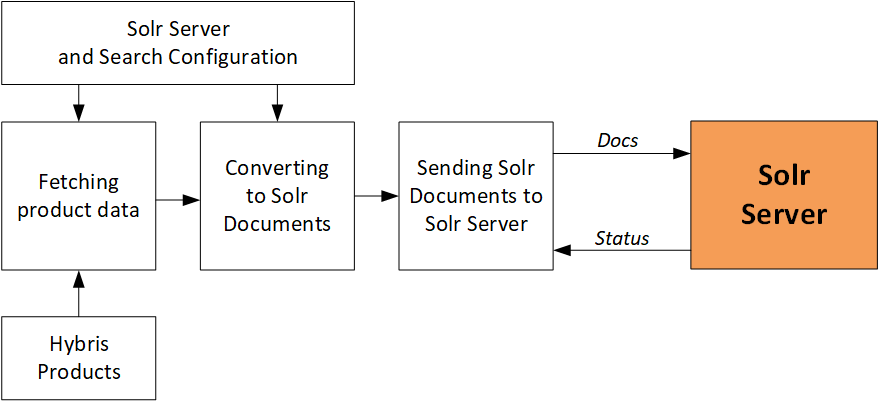

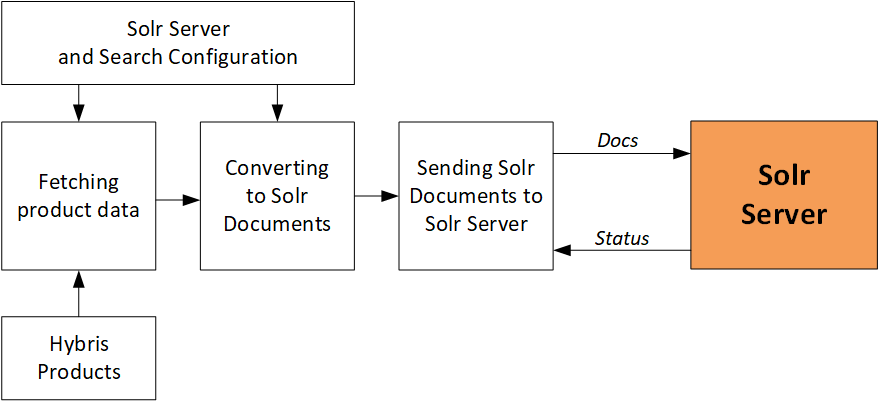

Indexing

Hybris fetches data from the database, converts them into SolrJ documents, and sends to Solr Server in batches (100 documents each). There are overridable listeners executed before and after batches. There are also listeners before and after the whole indexing process. Full indexing recreates the index. For the update mode, only new and changed products are processed.

Search

For the search, the system converts the customer search query into a Solr Search Query.

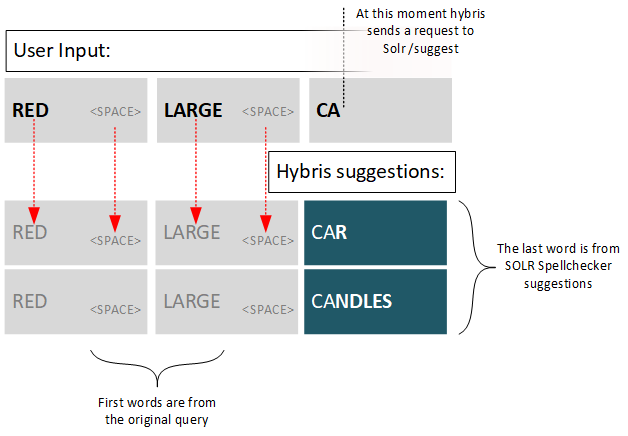

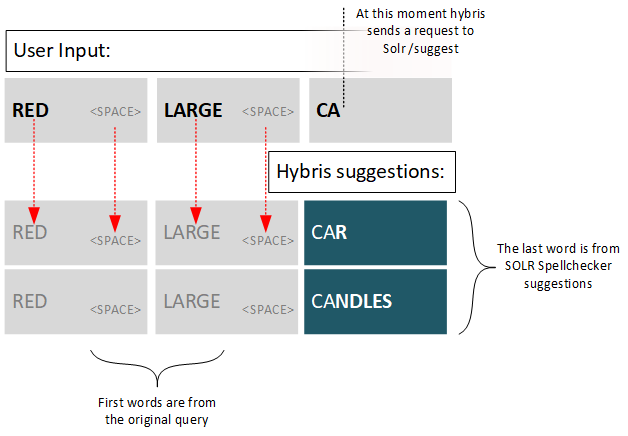

Autosuggest

Autosuggestion / autocomplete is built on the spellcheck functionality. Solr has Suggester component specially designed for this purpose, but for some reason Hybris decides to go with the old reliable spellcheck component. How does hybris find words for “CA…”? there is a Solr multivalued field called “autosuggest” (and its language versions autosuggest_) of the “text_spell” type. This type is defined as Solr standard TextField type. It contains lowercased tokens (words) from the product attributes configured as “autosuggest” indexed properties.

How does hybris find words for “CA…”? there is a Solr multivalued field called “autosuggest” (and its language versions autosuggest_) of the “text_spell” type. This type is defined as Solr standard TextField type. It contains lowercased tokens (words) from the product attributes configured as “autosuggest” indexed properties.

Spellcheck

Spellchecking uses the similar Solr interface. For spellcheck fields hybris uses a different set of product attributes.* * *

However, there are grey areas which are not documented well. For example, for a single-word request, Hybris generates a comprehensive multi-line query containing various filters and conditions. Understanding of this mechanism is crucial for troubleshooting, solutioning and customization. Solr logs help to understand the real queries generated by hybris. For the default installation, the logs are here:hybris\log\solr\instances\default\solr.log

Each line represents a condition. The number at the end is a boost factor used in the relevancy calculation. Boost factor is used to increase or decrease the importance in the filter in the query. Below I will show how this value is used in the relevancy calculation for search result ranking.

The Solr query for the sample query

Each line represents a condition. The number at the end is a boost factor used in the relevancy calculation. Boost factor is used to increase or decrease the importance in the filter in the query. Below I will show how this value is used in the relevancy calculation for search result ranking.

The Solr query for the sample query

kw1 kw2

For three and more words, the search request has a condition for the whole set of the words as a single phrase. For example, for the request “kw1 kw2 kw3” the “black” section contains three words, and the blue and red sections have

For three and more words, the search request has a condition for the whole set of the words as a single phrase. For example, for the request “kw1 kw2 kw3” the “black” section contains three words, and the blue and red sections have

kw3

(...single word conditions ...) ... OR ((code_string:"kw1 kw2 kw3 kw4"^90.0) OR (keywords_text_en:"kw1 kw2 kw3 kw4"^40.0) OR (manufacturerName_text:"kw1 kw2 kw3 kw4"^80.0) OR (description_text_en:"kw1 kw2 kw3 kw4"^50.0) OR (categoryName_text_en_mv:"kw1 kw2 kw3 kw4"^40.0) OR (ean_string:"kw1 kw2 kw3 kw4"^100.0) OR (name_text_en:"kw1 kw2 kw3 kw4"^100.0))" ...You may expect that for the request “compact Sony cameras“, the subphrases “compact Sony” and “Sony cameras” will be taken into account by the search engine and affect the relevancy. This is not so: only the whole phrase “company Sony cameras” is considered as a phrase having specific boost factors.

Search ranking in hybris

Fine, now we are at the best part. Why lenses are at the 10th position? Look at the queries above. They have some magic numbers after “^” sign. These numbers are boost factors. You can change them for any indexed field in the Backoffice. Specifically, there are four boost factors used in the queries:- Single keyword full-text search boost factor (ftsQueryBoost)

- Phrase query boost factor (ftsPhraseQueryBoost)

- Fuzzy query boost factor (ftsFuzzyQueryBoost)

- Wildcard Query boost factor (ftsWildcardQueryBoost)

This configuration says, for example, that

This configuration says, for example, that

code

camera lenses

| Monopod 100 | Schneider-Kreuznach Xenar 0.7X Wide Angle Lens, 55mm |

| description: “Canon Monopod 100 for SLR Cameras & Lenses“ | description: Put this wide-angle lens in your camera bag and soon you’ll be putting spectacular photos on your wall. Our SCHNEIDER-KREUZNACH XENAR 0.7X Wide-Angle Lens increases your angle of view a full 30 percent. Features -0.7X magnification (equivalent to 26.6 mm). -55 mm thread size. -4-element, all-glass SCHNEIDER-KREUZNACH XENAR Lens. -Protective storage pouch. Capture breathtaking outdoor vistas like expansive ocean cliffs, a city skyline, or the crowd cheering their favorite team at the stadium. Or use it indoors to capture entire rooms—from piano recitals to the amazing exhibits at the Louvre. This all-glass, 4-element lens is developed in conjunction with SCHNEIDER-KREUZNACH so you know your pictures will be extremely sharp. Our camera lenses work together to deliver the high-quality pictures you expect. |

| categoryName: “Digital SLR”, “Cameras”, “Tripods”, “Open Catalogue”, “Digital Cameras” | categoryName: “Cameras”, “Digital SLR”, “Digital Cameras”, “Camera Lenses”, “Open Catalogue” |

| Monopod 100 | Schneider-Kreuznach Xenar 0.7X Wide Angle Lens, 55mm | |

| Exact match check: | ExactScore ( “camera” in description, “camera” in categoryName) | ExactScore ( “camera” in description, “camera” in categoryName) |

| ExactScore ( “lens” in description ) | ExactScore ( “lens” in description “lens” in categoryName) | |

| Fuzzy match check: | FuzzyScore ( “camera” in description, “camera” in categoryName) | FuzzyScore ( “camera” in description, “camera” in categoryName) |

| FuzzyScore ( “lens” in description ) | FuzzyScore ( “lens” in description “lens” in categoryName) | |

| Phrase match check: | PhraseScore ( “camera lens” in description ) | PhraseScore ( “camera lens” in description “camera lens” in categoryName) |

- Extract the Solr request from the Solr log

- Perform this request in Solr with the debug on

- Find the score calculation in the response along with the search results

| Monopod 100 TOTAL SCORE=401.42 | Schneider-Kreuznach Xenar 0.7X Wide Angle Lens, 55mm TOTAL SCORE=238.97 | |

| Exact match check: | ExactScore=46.493 (=max of “camera” in description (46.493), “camera” in categoryName(7.6) ) | ExactScore=35.191 (=max of “camera” in description(35.191), “camera” in categoryName(9.02)) |

| ExactScore=76.045 (=max of “lens” in description(76.045) ) | ExactScore=48.828 (=max of “lens” in description (39.926) “lens” in categoryName(48.828)) | |

| Fuzzy match check: | FuzzyScore=18.597 (=max of “camera” in description(18.597), “camera” in categoryName(3.84)) | FuzzyScore=14.076 (=max of “camera” in description (14.076), “camera” in categoryName (4.511)) |

| FuzzyScore=15.209 (=max of “lens” in description(18.597) ) | FuzzyScore=12.207 (=max of “lens” in description (7.985) “lens” in categoryName (12.207)) | |

| Phrase match check: | PhraseScore=245.077 (max of “camera lens” in description(245.077) ) | PhraseScore=128.673 (=max of “camera lens” in description(128.673) “camera lens” in categoryName(108.335)) |

- Total exact score of the Monopod is higher than the same for the lens

- Total Fuzzy score is also higher

- Total Phrase score is also higher

- Total Score = Sum ( Scores )

- Where Score = Boost * TF * IDF

- Where TF is Normalized Term Frequency. How often the term occur in the document.

- Where IDF is Inversed Document Frequency. How special term, how rare it is. How many documents a term appears in. “Inversed” means the following: very frequent terms have smaller values of IDF.

- Original request: “camera lenses”

- Results:

- Monopod, TOTAL SCORE=401.42

- Lens, TOTAL SCORE=238.97

- The most influencing component is “camera lens” in description

- Score = Boost * function (TF, IDF)

where:

where:

- freq is term frequency in the document = 1 for both cases, because the phrase “camera lenses” is used once per document

- k1 is a constant; k1 = 1.2;

- b is a constant; b = 0.75;

- len is a length of the field

- averageLen is an average length of this field in all records.

| Monopod 100 | Schneider-Kreuznach Xenar 0.7X Wide Angle Lens, 55mm |

| freq=1 | freq=1 |

| k1=1.2 | k1=1.2 |

| b=0.75 | b=0.75 |

| average Field Length= 115.01 | average Field Length= 115.01 |

| len = 42 (before normalization) | len = 826 (before normalization) |

| TF Norm = 1.6228 | TF Norm = 0.8520 |

Score(categoryName contain “camera lenses”) = (Boost40IDF3.17TFNorm0.85)=108.33

Score(description contain “camera lenses”) = (Boost50IDF3.01TFNorm0.85)=128.49

Score(categoryName contain “camera lenses”) = (Boost40IDF3.17TFNorm0.85)=108.33

Score(description contain “camera lenses”) = (Boost50IDF3.01TFNorm0.85)=128.49

Lens in the name?

You may ask, what about “Lens” in the name? Why it doesn’t take it into account? We see that “Lenses” is a plural form of “lens”, and the search has a stemming filter which is able to process word forms. Indeed, yes and no. The name of the product “Schneider-Kreuznach Xenar 0.7X Wide Angle Lens, 55mm” is stored in the Solr field “name_string_en” that dynamically assigned to a type text_en:<dynamicField name="*_text_en" type="text_en" indexed="true" stored="true" />

text_en

<fieldType name="text_en" class="solr.TextField" positionIncrementGap="100">

<analyzer>

<tokenizer class="solr.StandardTokenizerFactory" />

<filter class="solr.StopFilterFactory" words="lang/stopwords_en.txt" ignoreCase="true" />

<filter class="solr.ManagedStopFilterFactory" managed="en" />

<filter class="solr.SynonymFilterFactory" ignoreCase="true" synonyms="synonyms.txt"/>

<filter class="solr.ManagedSynonymFilterFactory" managed="en" />

<filter class="solr.WordDelimiterFilterFactory" preserveOriginal="1" generateWordParts="1" generateNumberParts="1" catenateWords="1" catenateNumbers="1" catenateAll="0" splitOnCaseChange="1" />

<filter class="solr.LowerCaseFilterFactory" />

<filter class="solr.ASCIIFoldingFilterFactory" />

<filter class="solr.SnowballPorterFilterFactory" language="English" />

</analyzer>

</fieldType>

<analyzer>

<tokenizer class="solr.StandardTokenizerFactory" />

<filter class="solr.StopFilterFactory" words="lang/stopwords_en.txt" ignoreCase="true" />

<filter class="solr.ManagedStopFilterFactory" managed="en" />

<filter class="solr.SynonymFilterFactory" ignoreCase="true" synonyms="synonyms.txt"/>

<filter class="solr.ManagedSynonymFilterFactory" managed="en" />

<filter class="solr.WordDelimiterFilterFactory" preserveOriginal="1" generateWordParts="1" generateNumberParts="1" catenateWords="1" catenateNumbers="1" catenateAll="0" splitOnCaseChange="1" />

<filter class="solr.LowerCaseFilterFactory" />

<filter class="solr.ASCIIFoldingFilterFactory" />

<filter class="solr.SnowballPorterFilterFactory" language="English" />

</analyzer>

</fieldType>

<fieldType name="string" class="solr.StrField" sortMissingLast="true" omitNorms="true">

<similarity class="de.hybris.platform.lucene.search.similarities.FixedTFIDFSimilarityFactory" />

</fieldType>

<similarity class="de.hybris.platform.lucene.search.similarities.FixedTFIDFSimilarityFactory" />

</fieldType>

That is why you need to test the search thoroughly after any change in the boost factors and tune the boosts…. but testing of the search is a topic for a separate article. Stay tuned!

Ramy

17 July 2017 at 09:06

Hi, this is very informative article, with very good explanation for how the Solr and Hybris interact, specially the part about the algorithms.

Just have one recommendation, you can use solrQueryDebuggingListener for debugging the Solr query from Hybris instead of extracting the query and pasting it in Solr admin.

Thanks.

Navigation | hybrismart | SAP hybris under the hood

17 July 2017 at 19:31

[…] SOLR (partial update, multi-line product search, static pages and products in the same list, solr 6 in 5.x, 90M personalized prices, 500K availability groups, solr cloud, highlighting, 2M products/marketplace, more like this, concept-aware search: automatic facet discovery), explaining relevance ranking for phrase queries […]

Vishal

21 July 2017 at 03:35

Hi,

Nice article. I have a question about how will I achieve relevance search on multi-value fields?

Because I have custom multi-value field in solr hybris configuration.

Thanks in advance

Rauf Aliev

21 July 2017 at 08:12

Via concatenation like all values are one long string. For example, category field is multi value: product can be assigned to more than one category. From the search engine point of view, the sets of terms for multivalue and for single value are the same

Zalak

5 September 2017 at 09:34

Hi,

I have question regarding solr query parameter, can i give dynamic value in solr query using custom request handler in hybris?

Thanks

Rauf Aliev

5 September 2017 at 09:39

What kind of dynamic value do you mean? All values are basically dynamic…

Zalak

6 September 2017 at 03:06

Hi,

Actually, I want to tell solr to document only those cart which are ideal and not converted into the order from more than one day. I don’t want to indexed whole cart data just like product data. Time duration will be configurable in solr search query which i think can be done using custom request handler.

Thanks

Max

12 September 2017 at 10:19

Hi

Is there a way to ensure that results match all terms across any of the fields?

For instance, using your search, I don’t want to see any products which match only ‘camera’ and don’t have ‘lens’ in any fields (or vice versa)

Alternatively, could you return results which reach a minimum relevancy score threshold?

Thanks

Rauf Aliev

12 September 2017 at 06:36

If you need to have the documents with all terms from the query, you need to use AND-search rather than OR-search explained in the article. Score threshold is a bad idea. The score formula may produce very different scores, and absolute value means nothing.

Max

2 October 2017 at 13:28

Thanks for your speedy reply!

Where would the AND statements appear? If I look at the example above, there are roughly 30 different queries across the different facets. There needs to be a mixture of AND/OR…

Dagmara

25 October 2017 at 19:04

Hi Rauf, my search has a few indexed fields with different boosts. A client asked why products with summary containing specific keyword are not showing up. Summary has a boost value 1. Model number has boost 10. If I increase the boost of summary to 5, the number of products returned from the search increases and the ones that have the keyword in summary BUT no model number show up. With boost 1, they don’t. I can’t figure out why because I can see the summary in the solr query. Is there some relevancy threshold limit that ignores results? Thanks!

Rauf Aliev

25 October 2017 at 20:23

Looks strange, but the first thing I would do is to turn solr debug on to see at the parsed query and relevancy calculation. Also it’s important to check what query builder is active